As I mentioned in my last article, I found this particular reply intriguing and decided to work on it.

This series will document my thought process as I work on that idea.

If you just want to look at the latest version of the patch, you can use the lore URL linked here.

As of the time of writing this article, the patch series is at v8.

The Problem

I found the original message very dense. This section is about breaking it down into digestible chunks.

RX filtering, i.e., dropping received frames that the device has no reason to accept, is a core requirement of network devices. dev_set_rx_mode() is the abstraction in net/core that is responsible for programming RX filters, i.e., informing the hardware which destination addresses (unicast, multicast, or everything in promiscuous mode) to accept frames for.

This filtering happens at the MAC layer and is very different from firewalls or netfilter processing, which happen after the frames have already been accepted.

Naturally, part of this programming is device specific. That’s implemented as the ndo_set_rx_mode callback which is eventually invoked by dev_set_rx_mode().

The code looks something like:

    void __dev_set_rx_mode(struct net_device *dev)
    {
        const struct net_device_ops *ops = dev->netdev_ops;

        /* Validation here */

        if (ops->ndo_set_rx_mode)
            ops->ndo_set_rx_mode(dev);
    }

    void dev_set_rx_mode(struct net_device *dev)
    {
        netif_addr_lock_bh(dev);
        __dev_set_rx_mode(dev);
        netif_addr_unlock_bh(dev);
    }

where netif_addr_lock_bh() and netif_addr_unlock_bh() lock and unlock the spinlock dev->addr_list_lock, which is needed to synchronize access to the unicast/multicast address lists.

However, this means the driver's I/O is performed while holding a spinlock, which is problematic because that I/O may need to sleep, and sleeping is not allowed while holding a spinlock. Drivers have tried to work around this limitation in a few ways.

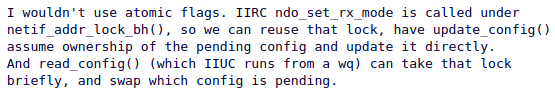

Some drivers make the I/O asynchronous, like the rtl8150 driver mentioned in the reply. This is a naive solution: it can lead to accumulating I/O requests faster than they can be executed. Obsolete backlogs are useless, as only the latest request matters.

A smarter approach is to perform the actual I/O in a work item, with the set_rx_mode callback simply scheduling the work. This prevents backlogs: scheduling the work either does nothing if it is already queued and hasn't started yet, or queues it again if it's already running, ensuring nothing is missed. This works, but it has to be reimplemented and reviewed separately in every driver, which puts a lot of strain on the reviewers.
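To see why a work item avoids backlogs, here is a minimal single-threaded userspace model. It is not kernel code: toy_work, toy_schedule and toy_run are hypothetical names that only mimic the rule that a work item which is already waiting to run is not queued a second time.

```c
#include <assert.h>
#include <stdbool.h>

/* Toy stand-in for a work item: a handler plus a "pending" flag. */
struct toy_work {
    void (*fn)(struct toy_work *w);
    bool pending;
    int runs;   /* how many times the handler has executed */
};

/* Queue the work unless it is already waiting to run. */
static bool toy_schedule(struct toy_work *w)
{
    assert(w->fn);      /* the item must have a handler first */
    if (w->pending)
        return false;   /* coalesced with the request already queued */
    w->pending = true;
    return true;
}

/*
 * What the worker would do. The flag is cleared before the handler
 * runs, so a request that arrives during the handler is queued again.
 */
static void toy_run(struct toy_work *w)
{
    if (!w->pending)
        return;
    w->pending = false;
    w->fn(w);
}

/* Stands in for the slow I/O that may sleep. */
static void toy_handler(struct toy_work *w)
{
    w->runs++;
}

/* Three back-to-back requests result in a single handler execution. */
static int toy_demo(void)
{
    struct toy_work w = { .fn = toy_handler };

    toy_schedule(&w);
    toy_schedule(&w);
    toy_schedule(&w);
    toy_run(&w);
    toy_run(&w);        /* nothing pending, so nothing runs */
    return w.runs;
}
```

In this model the second and third toy_schedule() calls are no-ops, which is exactly the property the asynchronous-I/O approach lacks.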

The correct solution (as suggested in the reply) is to build on this idea.

We don’t want to access the shared data during the I/O, as we are removing the spin lock. The best approach is to take a snapshot of the RX mode config when dev_set_rx_mode() is invoked. We then use this snapshot to confirm the rx mode request and perform the I/O.
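The snapshot idea can be sketched in userspace as follows. This is only a model under my own assumptions: toy_rx_state, toy_live and the toy spinlock built on a C11 atomic_flag are hypothetical stand-ins for the device's address lists and dev->addr_list_lock. The point is that the copy is taken under the lock, while whatever consumes the copy no longer needs it.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <string.h>

#define TOY_MAX_ADDRS 4
#define TOY_ADDR_LEN  6

/* Hypothetical shared RX state; stands in for the address lists. */
struct toy_rx_state {
    unsigned char addrs[TOY_MAX_ADDRS][TOY_ADDR_LEN];
    int count;
    bool promisc;
};

static atomic_flag toy_lock = ATOMIC_FLAG_INIT;  /* toy spinlock */
static struct toy_rx_state toy_live;             /* protected by toy_lock */

static void toy_spin_lock(void)
{
    while (atomic_flag_test_and_set(&toy_lock))
        ;   /* busy-wait until the flag is released */
}

static void toy_spin_unlock(void)
{
    atomic_flag_clear(&toy_lock);
}

/* Modify the live state; this always happens under the lock. */
static void toy_add_addr(const unsigned char *addr)
{
    assert(addr);
    toy_spin_lock();
    if (toy_live.count < TOY_MAX_ADDRS)
        memcpy(toy_live.addrs[toy_live.count++], addr, TOY_ADDR_LEN);
    toy_spin_unlock();
}

/* Copy the state under the lock; the copy is private afterwards. */
static struct toy_rx_state toy_snapshot(void)
{
    struct toy_rx_state snap;

    toy_spin_lock();
    snap = toy_live;
    toy_spin_unlock();
    return snap;
}
```

Later changes to toy_live do not affect a snapshot that was already taken, so the slow I/O can work from the snapshot without holding the lock.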

With this understanding, here is my first attempt at implementing this idea.

Prerequisites

Honestly, I had a hard time figuring out where to start. From the reply, it was clear that workqueues are paramount. So let’s start there.

Workqueues:

Since I did not know what workqueues were, it was time to read the docs.

In brief,

- A work item is a wrapper around a function that is to be executed later. This function is called a work handler.

- Workqueues are queues of work items.

- An independent thread (called the worker) serves as the execution context for the functions described by the work items.

- While there are work items on the workqueue, the worker executes the functions associated with the work items one after the other.

- Functions executing in the workqueue context can sleep.

That helps but that doesn’t explain how we can use it. This is where driver code becomes useful.

I decided that the lan78xx driver would be a good starting point since that used a work item in the ndo_set_rx_mode callback.

By tracing the usages of set_multicast (the work item) in the driver code, I deduced that the correct steps for using the workqueue API are:

1) Define a struct work_struct x

2) Call INIT_WORK(&x, fun) to initialize x with the function fun. fun is now the work handler for x

3) Call schedule_work(&x) to queue the work on the global workqueue (and hence eventually run it)

4) Call cancel_work_sync(&x) when you want to cancel fun's execution (it also waits for fun to finish if it is already running)

We could define and use a dedicated workqueue but as of now the global workqueue seems good enough for this refactor.

Network Device Operations and the Core/Driver split

I haven’t yet explained what an NDO (network device operation) is, so this section fills that gap.

Every mechanism in the networking stack that interacts with hardware is split into two parts: generic logic and device-specific logic.

- The generic part is implemented in net/core and defines the mechanism. This code is shared across all network devices.

- The device-specific part is implemented by the corresponding drivers as callbacks. These callbacks define how the mechanism is carried out on a particular device.

These callbacks are grouped together in a structure called struct net_device_ops. The generic logic invokes the appropriate callback when it needs the device-specific logic.

For example, consider the driver rtl8150.

    static const struct net_device_ops rtl8150_netdev_ops = {
        .ndo_open = rtl8150_open,
        .ndo_stop = rtl8150_close,
        .ndo_siocdevprivate = rtl8150_siocdevprivate,
        .ndo_start_xmit = rtl8150_start_xmit,
        .ndo_tx_timeout = rtl8150_tx_timeout,
        .ndo_set_rx_mode = rtl8150_set_multicast,
        .ndo_set_mac_address = rtl8150_set_mac_address,
        .ndo_validate_addr = eth_validate_addr,
    };

This net_device_ops structure is assigned to a struct net_device dev as:

dev->netdev_ops = &rtl8150_netdev_ops;

Later, when dev_set_rx_mode() is called on the same dev, it eventually invokes the ndo_set_rx_mode callback:

    if (ops->ndo_set_rx_mode)
        ops->ndo_set_rx_mode(dev);

For this particular network device, dev->netdev_ops->ndo_set_rx_mode points to rtl8150_set_multicast. In other words, the above call is effectively equivalent to:

rtl8150_set_multicast(dev);

In short, the generic dev_set_rx_mode() code effectively calls the driver’s rtl8150_set_multicast() function for this device.
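The core/driver split boils down to a table of function pointers. Here is a cut-down userspace sketch of the same dispatch; toy_dev, toy_dev_ops and the other toy_ names are hypothetical and only mirror the shape of struct net_device and struct net_device_ops.

```c
#include <assert.h>
#include <stddef.h>

struct toy_dev;

/* Hypothetical, cut-down ops table with a single optional callback. */
struct toy_dev_ops {
    void (*set_rx_mode)(struct toy_dev *dev);
};

struct toy_dev {
    const struct toy_dev_ops *ops;
    int rx_mode_calls;  /* bumped by the driver callback below */
};

/* "Driver" side: the device-specific implementation. */
static void toy_driver_set_rx_mode(struct toy_dev *dev)
{
    dev->rx_mode_calls++;
}

static const struct toy_dev_ops toy_driver_ops = {
    .set_rx_mode = toy_driver_set_rx_mode,
};

/* A driver that does not implement the callback at all. */
static const struct toy_dev_ops toy_empty_ops = {
    .set_rx_mode = NULL,
};

/* "Core" side: generic code that only knows about the ops table. */
static void toy_core_set_rx_mode(struct toy_dev *dev)
{
    assert(dev && dev->ops);
    if (dev->ops->set_rx_mode)
        dev->ops->set_rx_mode(dev);
}
```

The generic function never names the driver function directly; it only checks whether the callback is set and calls through the pointer, which is why the NULL check appears in the kernel code as well.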

Design V1

Well, the start is still difficult. Let’s start with things we do know:

- There is a new work item

- There has to be a new NDO

The Work

I decided to name the new work item config_write. (The names get better in later versions.)

Incorporating the new NDO into this model was not straightforward, to say the least. My first thought was to have the NDO be the work handler.

That didn’t look good for a couple of reasons. Most importantly, the signature would be completely wrong for a work item. A function executed by a work item must have this form:

void (name)(struct work_struct *work);

Hmm, what if we define a function with this signature and call our new NDO inside it? This allows the work handler to perform validation or extra logic without changing the NDO itself. I decided to name this execute_write_rx_config().

The Snapshot

Next, I had to decide what the snapshot should look like. The thing I came up with was a massive blunder but is still worth describing.

Since the config represents data to be written to hardware registers, it can be represented as a bounded char array (finite registers, finite size). Because the size depends on the driver, this array should live in the driver’s private data.

As to how this will be used:

- The config will be populated by the set_rx_mode NDO

- The config will be written to the hardware by the write_rx_config NDO

To support this, I added two macros, update_snapshot and read_snapshot, along with a spin lock config_lock for synchronization. These will be used like this:

update_snapshot will be called at the end of the set_rx_mode callback and read_snapshot will be called at the beginning of the write_rx_config callback.

I then added a wrapper struct rx_config_work and included a member rx_work of this type in struct net_device:

    struct rx_config_work {
        struct work_struct config_write;
        struct net_device *dev;
        spinlock_t config_lock;
    };

The pointer to dev is necessary to reference the enclosing net_device. This is a very common kernel pattern.
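To make the pattern concrete, here is a userspace sketch of how a work handler could get from the work item it receives back to the device. Everything prefixed with toy_ is hypothetical, and toy_container_of is a simplified stand-in for the kernel's container_of(); I am assuming the handler recovers the wrapper this way, which is one common approach rather than a quote from the patch.

```c
#include <assert.h>
#include <stddef.h>

/* Userspace stand-in for the kernel's container_of() helper. */
#define toy_container_of(ptr, type, member) \
    ((type *)((char *)(ptr) - offsetof(type, member)))

/* Toy work item: the handler only ever receives a pointer to this. */
struct toy_work_struct {
    void (*fn)(struct toy_work_struct *work);
};

struct toy_net_device {
    int write_calls;
};

/* Mirrors the wrapper struct: work item plus a back-pointer. */
struct toy_rx_config_work {
    struct toy_work_struct config_write;
    struct toy_net_device *dev;
};

/* Hypothetical stand-in for the new NDO. */
static void toy_write_rx_config(struct toy_net_device *dev)
{
    dev->write_calls++;
}

/* Work handler: fixed signature, forwards to the NDO. */
static void toy_execute_write_rx_config(struct toy_work_struct *work)
{
    struct toy_rx_config_work *rx_work =
        toy_container_of(work, struct toy_rx_config_work, config_write);

    assert(rx_work->dev);   /* the back-pointer must have been set */
    toy_write_rx_config(rx_work->dev);
}
```

The handler cannot take the device as a parameter, so the back-pointer stored next to the work item is what connects the two.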

The flow looks something like this:

    // in alloc_netdev_mqs()
    INIT_WORK(&dev->rx_work->config_write, execute_write_rx_config);

    // in dev_set_rx_mode()
    schedule_work(&dev->rx_work->config_write);

    // in free_netdev()
    cancel_work_sync(&dev->rx_work->config_write);

This design was implemented in v1, v2 (was supposed to fix the build errors in v1 but wasn’t even a valid patch series) and v3 (which fixed that and added an RFT tag).

Post-mortem of V1

Why this aged like milk

I dropped the ball pretty hard :/

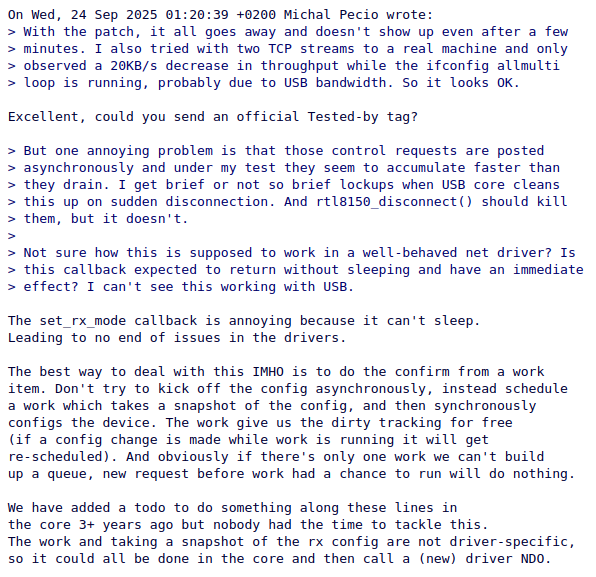

Jakub’s response points out the biggest problem with this approach.

In short, implementing V1 would have been extremely complex and error-prone — it’s a lot of work to decide what should go into the config for each driver.

This design was just bad. However, the fact that Jakub took the time to respond meaningfully suggested that something could be salvaged.

Salvaging V1

The work part was pretty solid and it was the snapshot part that was the problem. That’s what needed fixing.

With that in mind, I came up with this idea for what the config should look like:

    struct netif_rx_config {
        char *uc_addrs; // of size uc_count * dev->addr_len
        char *mc_addrs; // of size mc_count * dev->addr_len
        int uc_count;
        int mc_count;
        bool multi_en, promisc_en, vlan_en;
        void *device_specific_config;
    };

And this pseudocode for update_config() and read_config():

    atomic_t cfg_in_use = ATOMIC_INIT(false);
    atomic_t cfg_update_pending = ATOMIC_INIT(false);
    struct netif_rx_config *active, *staged;

    void update_config()
    {
        int was_config_pending = atomic_xchg(&cfg_update_pending, false);

        // If prepare_config fails, it leaves staged untouched
        // So, we check for and apply if pending update
        int rc = prepare_config(&staged);
        if (rc && !was_config_pending)
            return;

        if (atomic_read(&cfg_in_use)) {
            atomic_set(&cfg_update_pending, true);
            return;
        }

        swap(active, staged);
    }

    void read_config()
    {
        atomic_set(&cfg_in_use, true);
        do_io(active);
        atomic_set(&cfg_in_use, false);

        // To account for the edge case where update_config() is called
        // during the execution of read_config() and there are no subsequent
        // calls to update_config()
        if (atomic_xchg(&cfg_update_pending, false))
            swap(active, staged);
    }

The idea is still to update the snapshot in the set_rx_mode callback and consume it in the write_rx_config callback. What’s new is the explicit config prep step and more structure in general.

update_config prepares a new snapshot and publishes it only if the current snapshot is not being consumed by read_config.

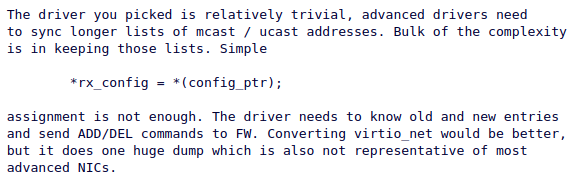

This was unnecessarily complicated, and Jakub helped me simplify it.

Here’s the simplified version:

    // These variables will be part of dev->netif_rx_config_ctx in the final code
    bool pending_cfg_ready = false;
    struct netif_rx_config *ready, *pending;

    void update_config()
    {
        int rc;

        WARN_ONCE(!spin_is_locked(&dev->addr_list_lock),
                  "netif_update_rx_config() called without netif_addr_lock_bh()\n");

        rc = netif_prepare_rx_config(&pending);
        if (rc)
            return;

        pending_cfg_ready = true;
    }

    void read_config()
    {
        // We could introduce a new lock for this but
        // reusing the addr lock works well enough
        netif_addr_lock_bh(dev);

        // There's no point continuing if the pending config
        // is not ready
        if (!pending_cfg_ready) {
            netif_addr_unlock_bh(dev);
            return;
        }

        swap(ready, pending);
        pending_cfg_ready = false;
        netif_addr_unlock_bh(dev);

        // The slow I/O runs without the lock, on a buffer the
        // producer can no longer touch
        do_io(ready);
    }

I decided to base Design V2 on this simplified pseudocode. I will explain the pseudocode there.
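Until then, the ready/pending handoff can be tried out in userspace. The following is a rough model under my own assumptions, not the patch itself: toy_cfg carries a single generation field instead of address lists, a C11 atomic_flag stands in for the addr lock, and toy_last_written stands in for the hardware.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>

/* Hypothetical, cut-down config: one field is enough for the handoff. */
struct toy_cfg {
    int generation;
};

static atomic_flag toy_cfg_lock = ATOMIC_FLAG_INIT; /* models the addr lock */
static struct toy_cfg toy_bufs[2];
static struct toy_cfg *toy_ready = &toy_bufs[0];
static struct toy_cfg *toy_pending = &toy_bufs[1];
static bool toy_pending_ready;
static int toy_last_written = -1;   /* what the "hardware" saw last */

static void toy_cfg_lock_acquire(void)
{
    while (atomic_flag_test_and_set(&toy_cfg_lock))
        ;   /* busy-wait */
}

static void toy_cfg_lock_release(void)
{
    atomic_flag_clear(&toy_cfg_lock);
}

/* Producer: the caller is assumed to hold the lock already. */
static void toy_update_config(int generation)
{
    toy_pending->generation = generation;
    toy_pending_ready = true;
}

/*
 * Consumer: swap the buffers under the lock, then do the slow part
 * without it. Returns true if any I/O was performed.
 */
static bool toy_read_config(void)
{
    struct toy_cfg *tmp;

    toy_cfg_lock_acquire();
    if (!toy_pending_ready) {
        toy_cfg_lock_release();
        return false;
    }
    tmp = toy_ready;
    toy_ready = toy_pending;
    toy_pending = tmp;
    toy_pending_ready = false;
    assert(toy_ready != toy_pending);
    toy_cfg_lock_release();

    toy_last_written = toy_ready->generation;   /* models do_io(ready) */
    return true;
}
```

Two updates followed by one read write only the newer config, and a read with nothing pending does no I/O at all, which is the behaviour the simplified design is after.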

Closing Thoughts

Design V1 failed because it was too theoretical and didn’t anticipate emergent complexity. The review process made this clear and helped steer the design in a more practical direction.

In Part 2, I will cover Design V2, along with the associated lifetime and memory-management concerns.

Thanks for reading.